Upscaling half resolution screen space effects

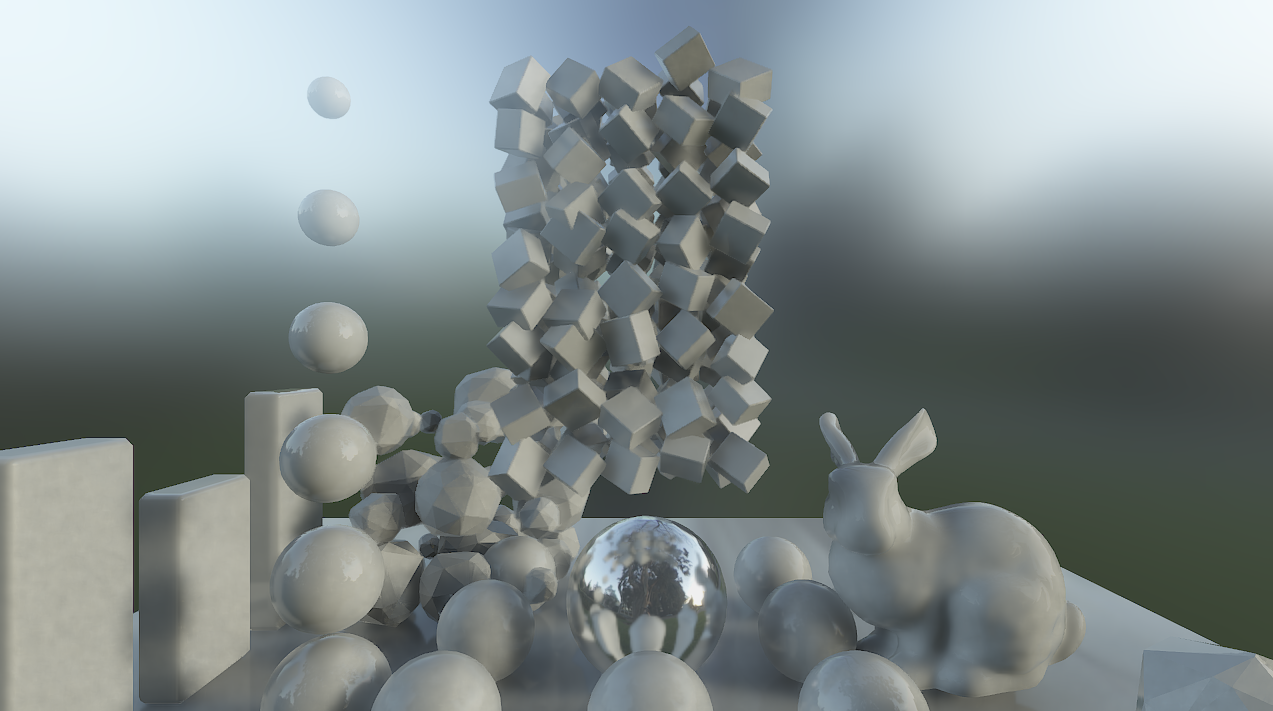

When working with diffuse lighting and ambient occlusion in screen space it is often very tempting to do computations in lower resolution. Most of it is blurry anyway, and for any kind of GI/path tracing, diffuse lighting is undoubtedly the bottleneck. Here is a test scene with all colours set to white and no textures.

Enabling only the diffuse lighting, the image looks strangely familiar.

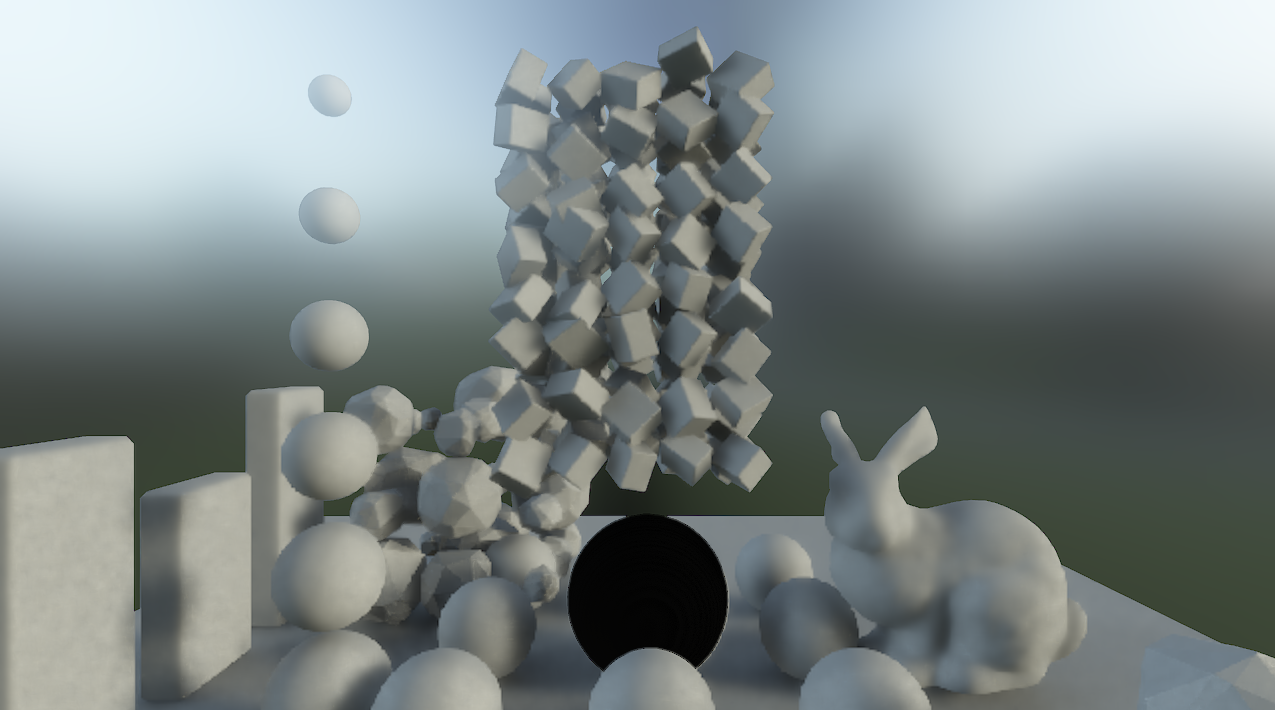

You quickly realise that diffuse lighting accounts for the lion’s share of the entire image. Since everything is the same colour, two overlapping objects can be told apart only because they differ in diffuse lighting. Lowering the resolution of the diffuse lighting therefore means that a lot of edges will also be half resolution, and the same diffuse lighting suddenly looks like this.

Not acceptable, but note that the image looks perfectly fine over larger areas where there are no edges, and also at the contours towards the skybox. I can think of two solutions to this problem:

1) Render at half resolution. Detect edges and re-render pixels near edges at full resolution during upsampling. This would probably work very well, but I haven’t tried it yet.

2) A cheaper solution would be to cover up faulty pixels on the edges using neighbouring pixels from the same surface (it’s all blurry, remember?), practically retouching the edges much the same way you retouch images in Photoshop.

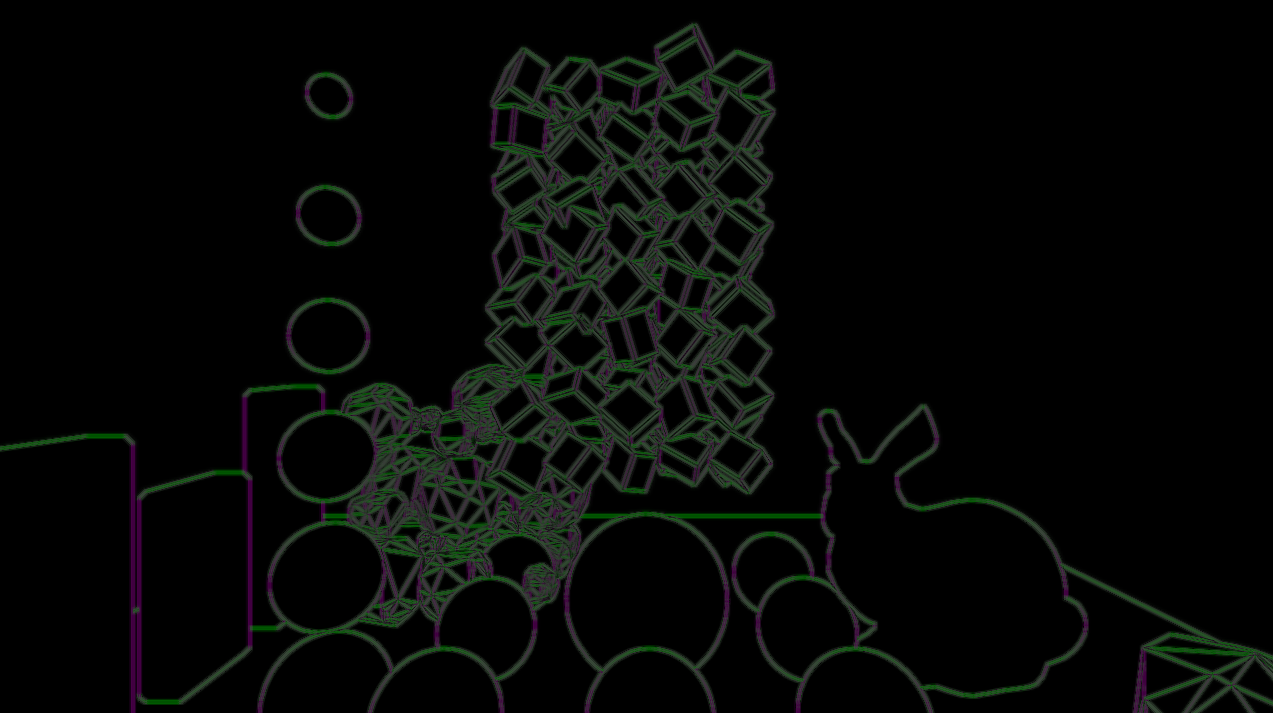

I decided to try the latter and got some interesting results. First I create a 2D “retouching” vector field. It is basically just an offset, telling each pixel where to fetch its samples. In the middle of a surface this will be (0,0), and near an edge it will point away from the edge. If you have any way of classifying surfaces in a shader, this is actually really cheap to do. I just use a unique number for each smoothing group to identify smooth surfaces. For each pixel, I check the eight neighbouring pixels and average the offsets of the ones that are in the same smoothing group. Ta-da, the average offset will now point in a direction away from each edge, and the retouch vector field looks something like this (here visualized upscaled and with absolute values):

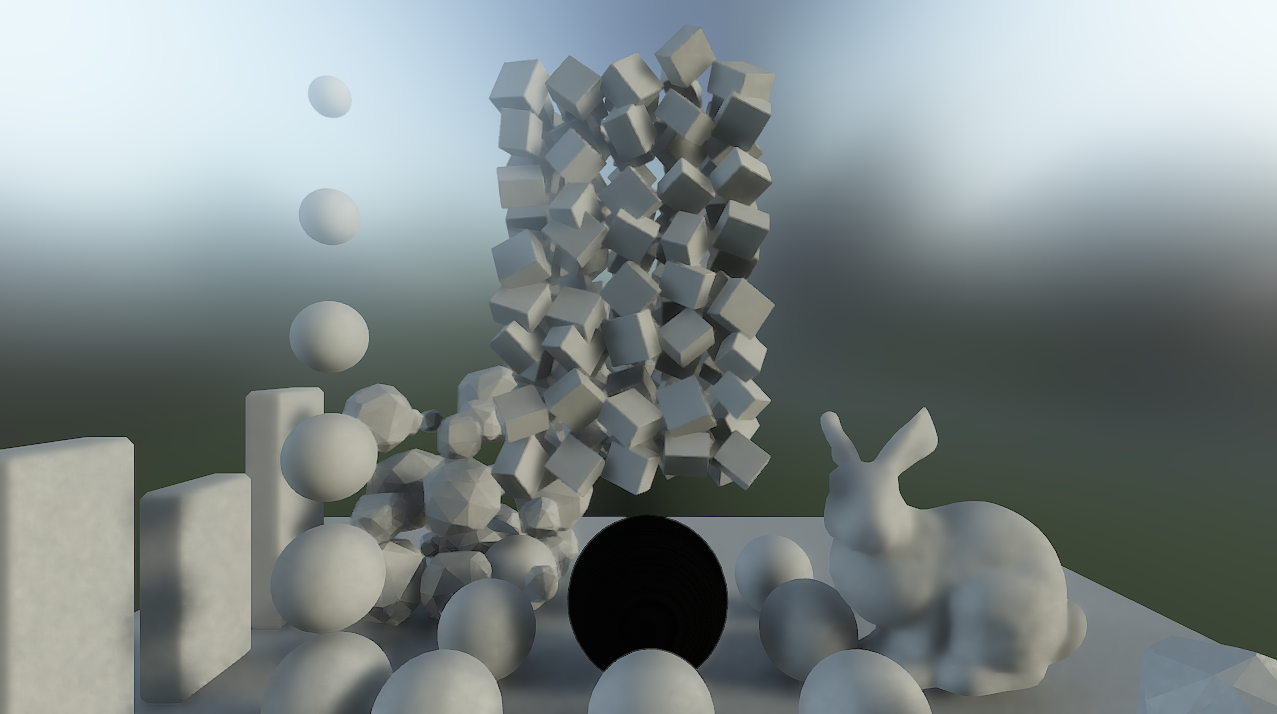

Now if you process the downscaled, half resolution, diffuse lighting through this retouch field during upscaling, the resulting image will magically look like this:

Congratulations, you just saved ~75% processing time for your diffuse lighting. There are artifacts, as always, but I found the results to be acceptable in most situations. Alternatively, the saving can be spent on quality: computing diffuse lighting in half resolution (a quarter of the pixel count) allowed me to take eight samples per pixel instead of two for about the same total number of samples, resulting in more accurate lighting and less noise.
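To make the upscaling step a bit more concrete, here is a hedged Python sketch of how the retouch field might be applied. It assumes the field is stored at half resolution with offsets in half-resolution texel units, and that the lighting is fetched with bilinear filtering. The names `sample_bilinear`, `upscale_with_retouch` and the `strength` parameter are hypothetical, not taken from the actual renderer.

```python
import math


def sample_bilinear(img, u, v):
    """Bilinearly sample a 2D list at texel coordinates (u, v), clamped."""
    h, w = len(img), len(img[0])
    u = min(max(u, 0.0), w - 1.0)
    v = min(max(v, 0.0), h - 1.0)
    x0, y0 = int(math.floor(u)), int(math.floor(v))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    tx, ty = u - x0, v - y0
    top = img[y0][x0] * (1 - tx) + img[y0][x1] * tx
    bottom = img[y1][x0] * (1 - tx) + img[y1][x1] * tx
    return top * (1 - ty) + bottom * ty


def upscale_with_retouch(lighting, field, strength=1.0):
    """Upscale half-res lighting 2x, shifting each fetch by the retouch offset."""
    half_h, half_w = len(lighting), len(lighting[0])
    out = [[0.0] * (half_w * 2) for _ in range(half_h * 2)]
    for y in range(half_h * 2):
        for x in range(half_w * 2):
            # Centre of the full-res pixel in half-res texel coordinates.
            u = (x + 0.5) / 2.0 - 0.5
            v = (y + 0.5) / 2.0 - 0.5
            # Offset from the half-res texel covering this pixel.
            off_x, off_y = field[y // 2][x // 2]
            out[y][x] = sample_bilinear(
                lighting, u + off_x * strength, v + off_y * strength
            )
    return out


# Bright surface on the left, dark surface on the right.
lighting = [[1.0, 1.0, 1.0, 0.0, 0.0, 0.0] for _ in range(4)]
no_field = [[(0.0, 0.0)] * 6 for _ in range(4)]
field = [[(0.0, 0.0), (0.0, 0.0), (-0.4, 0.0),
          (0.4, 0.0), (0.0, 0.0), (0.0, 0.0)] for _ in range(4)]
plain = upscale_with_retouch(lighting, no_field)
fixed = upscale_with_retouch(lighting, field)
```

With a zero field this is ordinary bilinear upscaling, and the two full-resolution pixels next to the edge come out as 0.75 and 0.25, which is the bleeding seen in the half resolution image. With the retouch offsets the same two pixels come out as 1.0 and 0.0, because each fetch is pushed back onto its own surface.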

Another really nice property of the retouch vector field is that once you’ve created it, you can reuse the same field for any screen space upscaling you might do. For instance, I reuse the same field when upscaling screen space reflections, and I’m hoping to use it for smoke particles too once I get there.